IsaacGym

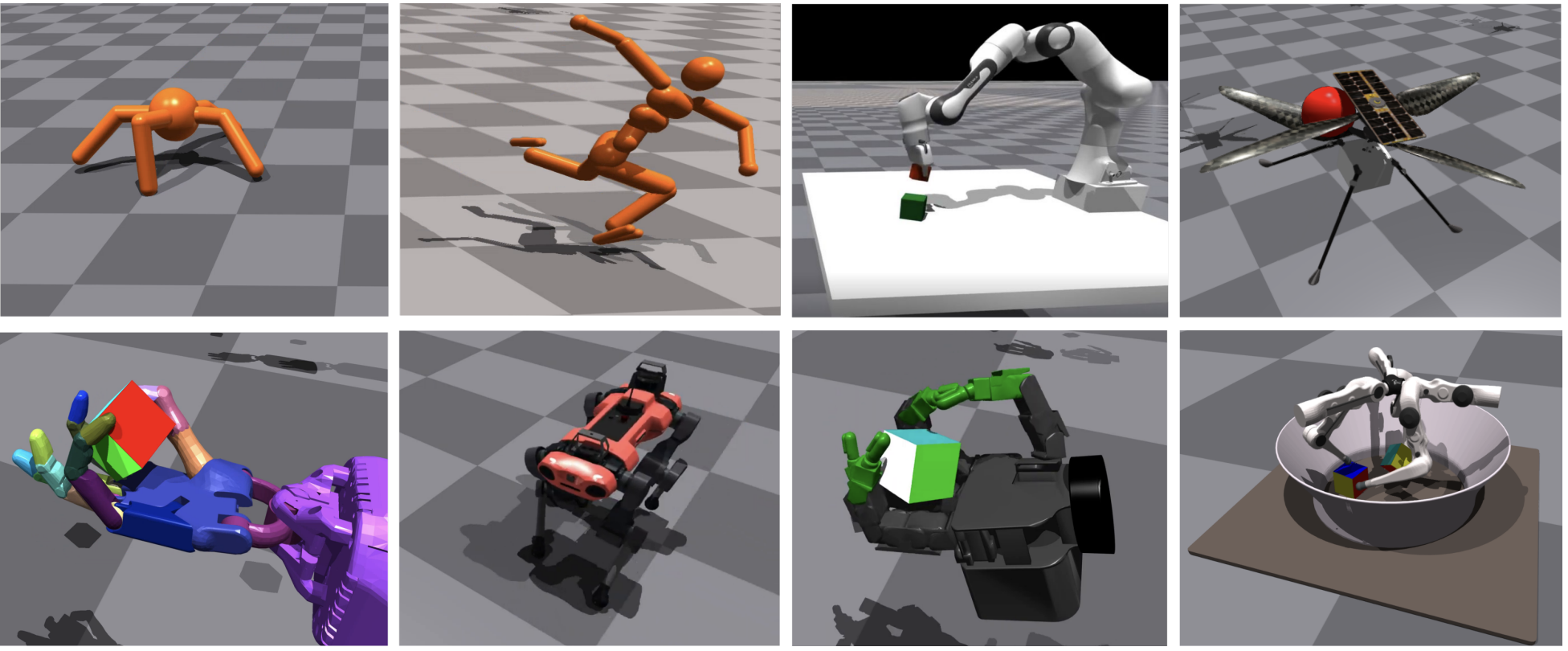

Isaac Gym: High Performance GPU-Based Physics Simulation For Robot Learning

NVIDIA’s physics simulation environment for reinforcement learning research.

Official Materials

Related Materials

Related Benchmarks

IsaacGymEnvs

This repository contains example RL environments for the NVIDIA Isaac Gym high performance environments described in NVIDIA's NeurIPS 2021 Datasets and Benchmarks paper.

Bi-DexHands

Bi-DexHands provides a collection of bimanual dexterous manipulation tasks and reinforcement learning algorithms. Reaching human-level sophistication of hand dexterity and bimanual coordination remains an open challenge for modern robotics researchers.

DexPBT

DexPBT implements challenging tasks for one- or two-armed robots equipped with multi-fingered hand end-effectors, including regrasping, grasp-and-throw, and object reorientation. It also introduces a decentralized Population-Based Training (PBT) algorithm that massively amplifies the exploration capabilities of deep reinforcement learning.

TimeChamber

TimeChamber is a large-scale self-play framework running on parallel simulation. Running self-play algorithms typically requires substantial hardware resources, especially in 3D physically simulated environments. TimeChamber provides a self-play framework that achieves fast training and evaluation with only one GPU.

Related Projects

- SIGGRAPH2023: CALM: Conditional Adversarial Latent Models for Directable Virtual Characters: IsaacGym

- ICCV2023: UniDexGrasp++: Improving Dexterous Grasping Policy Learning via Geometry-aware Curriculum and Iterative Generalist-Specialist Learning: IsaacGym; RGB-D; PointCloud

- CoRL2023: Dynamic Handover: Throw and Catch with Bimanual Hands: IsaacGym; RGB

- CoRL2023: Sequential Dexterity: Chaining Dexterous Policies for Long-Horizon Manipulation: IsaacGym; RGB-D; PointCloud

- CoRL2023: Curiosity-Driven Learning of Joint Locomotion and Manipulation Tasks: IsaacGym; RL

- CoRL2023: General In-Hand Object Rotation with Vision and Touch: IsaacGym; RGB-D

- CoRL2023: Fleet-DAgger: Interactive Robot Fleet Learning with Scalable Human Supervision

- CVPR2023: UniDexGrasp: Universal Robotic Dexterous Grasping via Learning Diverse Proposal Generation and Goal-Conditioned Policy: IsaacGym; RGB-D; PointCloud

- CVPR2023: PartManip: Learning Cross-Category Generalizable Part Manipulation Policy from Point Cloud Observations: IsaacGym; RGB-D; PointCloud

- ICRA2023: RLAfford: Official Implementation of "RLAfford: End-to-end Affordance Learning with Reinforcement Learning": IsaacGym

- ICRA2023: GenDexGrasp: Generalizable Dexterous Grasping: IsaacGym; RGB-D; PointCloud

- ICRA2023: RLAfford: End-to-End Affordance Learning for Robotic Manipulation: IsaacGym; RGB-D; PointCloud

- ICRA2023: ViNL: Visual Navigation and Locomotion Over Obstacles: IsaacGym

- RSS2023: AnyTeleop: A General Vision-Based Dexterous Robot Arm-Hand Teleoperation System: IsaacGym

- RSS2023: DexPBT: Scaling up Dexterous Manipulation for Hand-Arm Systems with Population Based Training: IsaacGym

- RSS2023: Rotating without Seeing: Towards In-hand Dexterity through Touch: IsaacGym

- ScienceRobotics2023: Visual Dexterity: In-Hand Reorientation of Novel and Complex Object Shapes: IsaacGym; RGB-D; PointCloud

- ICML2023: On Pre-Training for Visuo-Motor Control: Revisiting a Learning-from-Scratch Baseline: IsaacGym; RGB

- ICML2023: Parallel Q-Learning: Scaling Off-policy Reinforcement Learning: IsaacGym

- SIGGRAPHAsia2022: PADL: Language-Directed Physics-Based Character Control: IsaacGym

- ICRA2023: Real2Sim2Real: Self-Supervised Learning of Physical Single-Step Dynamic Actions for Planar Robot Casting: IsaacGym

- CoRL2022: In-Hand Object Rotation via Rapid Motor Adaptation: IsaacGym

- CoRL2022: Legged Locomotion in Challenging Terrains using Egocentric Vision: IsaacGym

- NeurIPS2022: Towards Human-Level Bimanual Dexterous Manipulation with Reinforcement Learning: IsaacGym; RGB-D; PointCloud

- ICRA2022: Data-Driven Operational Space Control for Adaptive and Robust Robot Manipulation: IsaacGym

- RSS2022: Rapid Locomotion via Reinforcement Learning: IsaacGym

- RSS2022: Factory: Fast Contact for Robotic Assembly: IsaacGym

- SIGGRAPH2022: ASE: Large-scale Reusable Adversarial Skill Embeddings for Physically Simulated Characters: IsaacGym

- CoRL2021: STORM: An Integrated Framework for Fast Joint-Space Model-Predictive Control for Reactive Manipulation: IsaacGym

- ICRA2021: Causal Reasoning in Simulation for Structure and Transfer Learning of Robot Manipulation Policies: IsaacGym

- ICRA2021: In-Hand Object Pose Tracking via Contact Feedback and GPU-Accelerated Robotic Simulation: IsaacGym

- IROS2021: Reactive Long Horizon Task Execution via Visual Skill and Precondition Models: IsaacGym

- ICRA2021: Sim-to-Real for Robotic Tactile Sensing via Physics-Based Simulation and Learned Latent Projections: IsaacGym

- RSS2021_VLRR: A Simple Method for Complex In-Hand Manipulation: IsaacGym

- CoRL2021: Learning to Walk in Minutes Using Massively Parallel Deep Reinforcement Learning: IsaacGym

- ICRA2021: Dynamics Randomization Revisited: A Case Study for Quadrupedal Locomotion: IsaacGym

- NeurIPS2021: Isaac Gym: High Performance GPU-Based Physics Simulation For Robot Learning: IsaacGym

- RAL2021: Learning a State Representation and Navigation in Cluttered and Dynamic Environments: IsaacGym

- CoRL2020: Learning to Compose Hierarchical Object-Centric Controllers for Robotic Manipulation: IsaacGym

- CoRL2020: Learning a Contact-Adaptive Controller for Robust, Efficient Legged Locomotion: IsaacGym

- RSS2020: Learning Active Task-Oriented Exploration Policies for Bridging the Sim-to-Real Gap: IsaacGym

- ICRA2019: Closing the Sim-to-Real Loop: Adapting Simulation Randomization with Real World Experience: IsaacGym

- CoRL2018: GPU-Accelerated Robotics Simulation for Distributed Reinforcement Learning: IsaacGym